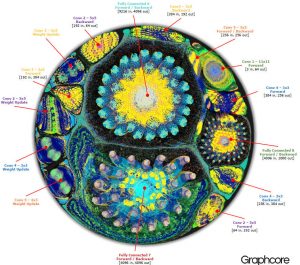

The false-colour image was created by the firm’s graph compiler software, which untangles neural networks as a prelude to executing them on Graphcore’s as-yet undisclosed hardware.

“In software, we explode the full graph, with all the vertices and all edges in between. Then we minimise all connections, which is why there are clumps in the photo, and map it onto our machine,” said Graphcore CEO Nigel Toon.

The terms graph, vertices and edges here come from graph theory, which is used as a tool to handle the data needed to implement machine learning techniques such as neural networks and deep networks.

Together, these terms describe a network (the graph) made up of nodes (vertices) and the communication channels between them (edges). “These computational graphs are made up of vertices – think neurons – for the compute elements, connected by edges – think synapses,” said Toon.
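To make the vertex and edge vocabulary concrete, here is a minimal sketch of a computational graph in Python. It is a generic illustration, not Graphcore’s Poplar API: the function `run_graph` and the toy single-neuron graph are hypothetical. Each vertex is a compute element (a function), each edge says which vertex’s output feeds which input, and the graph is evaluated in dependency (topological) order using the standard library’s `graphlib`.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable

def run_graph(vertices: dict[str, Callable[..., float]],
              edges: dict[str, list[str]],
              inputs: dict[str, float]) -> dict[str, float]:
    """Evaluate a toy computational graph (hypothetical helper).

    vertices: compute elements ("neurons"), each a function of its inputs.
    edges:    for each vertex, the ordered list of nodes feeding it
              (the "synapses" carrying values between compute elements).
    inputs:   values for source nodes that have no compute function.
    """
    values = dict(inputs)
    sorter = TopologicalSorter({v: edges.get(v, []) for v in vertices})
    # static_order() yields each node only after all of its predecessors.
    for node in sorter.static_order():
        if node in values:  # graph inputs are already known
            continue
        args = [values[src] for src in edges.get(node, [])]
        values[node] = vertices[node](*args)
    return values

# A single artificial neuron, relu(w * x + b), written as three vertices.
vertices = {
    "mul": lambda x, w: x * w,
    "add": lambda m, b: m + b,
    "relu": lambda s: max(0.0, s),
}
edges = {"mul": ["x", "w"], "add": ["mul", "b"], "relu": ["add"]}
result = run_graph(vertices, edges, {"x": 2.0, "w": -0.5, "b": 3.0})
print(result["relu"])  # 2.0
```

A real network simply has far more vertices and edges, which is why the full graph of a model such as AlexNet looks like the dense image above.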

Graphcore’s primary intellectual property is a processor, dubbed the IPU (intelligent processor unit), that executes complex high-dimensional graphs efficiently.

The firm is saying little about the hardware beyond this: “It emphasises massively parallel, low-precision floating-point compute and provides much higher compute density than other solutions.”

Toon claims: “Across a suite of machine learning problems, compared with Nvidia Pascal-generation GPUs, it is ten times to a hundred times faster to train or deploy at same power consumption.” He explained that although GPUs are used for machine learning, their architecture is biased towards rendering 2D images from 3D data, whereas an intelligent machine, such as a self-driving car, is more likely to be reconstructing a 3D environment from two camera images and knowledge of the objects likely to be in the scene.

The IPU is said to hold a complete machine learning model inside the processor and, in another claim explaining the algorithm speed-up, to have 100x more memory bandwidth than other solutions.

The same hardware can be used both to train machine learning models and to run them, Toon added: “Learning and execution are effectively the same compute problem – energy minimisation across a graph.”

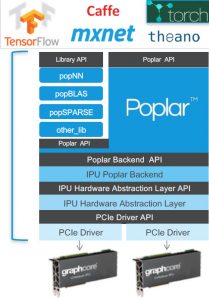

Rather than selling silicon or silicon intellectual property, Graphcore will sell IPU-based machine learning accelerator PCIe cards for servers, and a complete learning machine called the IPU-Appliance, available this year.

The graph compiler software, called Poplar, is a C++-based scalable programming framework, aimed at IPU-accelerated servers and IPU-accelerated server clusters, which abstracts the graph-based machine learning development process from the hardware.

It includes graph libraries for machine learning, and builds up an intermediate representation of the computational graph to be scheduled and deployed across one or many IPU devices.

The compiler can also display this computational graph: for an application written at the level of a machine learning framework, it produces an image of the computational graph that runs on the IPU. That is where the image above came from; it shows how AlexNet might be implemented.
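Toon’s description of minimising connections before mapping the graph onto the machine, and Poplar’s deployment of a graph across one or many IPUs, can be framed as a graph-partitioning problem: assign vertices to devices so that as few edges as possible cross between devices. Graphcore has not said which algorithm Poplar uses, so the sketch below is only an illustration of that objective under stated assumptions. The helpers `cut_edges` and `best_placement`, the per-device `capacity` limit and the toy graph are all hypothetical, and the exhaustive search is practical only for tiny graphs (its cost grows as `n_devices ** len(vertices)`); real partitioners rely on heuristics such as the multilevel methods in METIS.

```python
from itertools import product
from typing import Optional

Edge = tuple[str, str]

def cut_edges(edges: list[Edge], placement: dict[str, int]) -> int:
    """Count edges whose two endpoints are placed on different devices."""
    return sum(placement[a] != placement[b] for a, b in edges)

def best_placement(vertices: list[str], edges: list[Edge],
                   n_devices: int, capacity: int) -> Optional[dict[str, int]]:
    """Try every vertex-to-device assignment (tiny graphs only) and keep
    the one with the fewest cross-device edges, subject to a per-device
    vertex capacity. Returns None if no assignment fits."""
    best: Optional[dict[str, int]] = None
    best_cost: Optional[int] = None
    for choice in product(range(n_devices), repeat=len(vertices)):
        if any(choice.count(d) > capacity for d in range(n_devices)):
            continue  # this assignment overloads a device
        placement = dict(zip(vertices, choice))
        cost = cut_edges(edges, placement)
        if best_cost is None or cost < best_cost:
            best, best_cost = placement, cost
    return best

# Two tightly connected "clumps" (triangles) joined by a single edge.
vertices = ["a", "b", "c", "d", "e", "f"]
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"),
         ("c", "d")]
placement = best_placement(vertices, edges, n_devices=2, capacity=3)
print(placement, cut_edges(edges, placement))  # only the c-d edge is cut
```

Each clump lands on its own device and only one edge crosses between them, which mirrors the clumping Toon points to in the compiler’s image.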

Graphcore spun out of XMOS in the middle of last year with £30m from investors including Samsung, Bosch and Amadeus. It currently has over 40 employees.

Toon was brought into XMOS by its venture capitalists in 2012 to grow the company and subsequently convinced the investors to look at machine learning inside XMOS.

Last year, XMOS spun out Graphcore as an independent company, receiving Graphcore shares in exchange. Toon remains non-executive chairman of XMOS.